Agentic AI Becomes Your Engineering Runtime: PDCVR, Agents, DevScribe

Published Jan 3, 2026

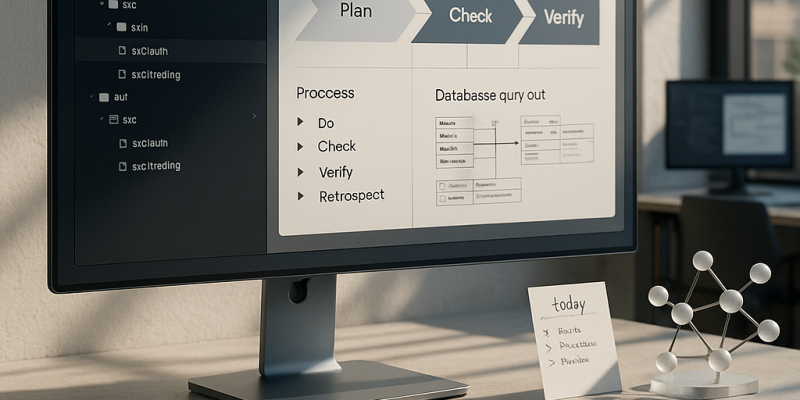

Worried your teams will waste weeks while competitors treat AI as a runtime, not a toy? Over the last two weeks, in threads dated Jan 2–3, 2026, engineering communities converged on an AI-native operating model you can use now. Its parts: a Plan-Do-Check-Verify-Retrospect (PDCVR) loop, used with Claude Code and GLM-4.7, that turns LLMs into fast, reviewable junior devs; folder-level instruction manifests plus a meta-agent that rewrites short human prompts into thorough tasks, cutting a typical 1–2 day ticket from ~8 hours to ~2–3 hours; DevScribe-style executable workspaces for local DB/API/diagram execution; explicit data-migration/backfill platforms; and agents that watch scope and dependencies to expose the “alignment tax.” Why it matters: advantage shifts from model choice to how you design and run the operating model, and these patterns are already becoming standard in fintech/trading and safety-critical stacks.

AI Becomes the Engineering Runtime: PDCVR, Agent Stacks, Executable Workspaces

Published Jan 3, 2026

Still losing hours to rework and scope creep? New practitioner threads (Jan 2–3, 2026) show AI shifting from ad-hoc copilots to an AI-native operating model, and here is what to act on. A senior engineer published a production-tested PDCVR (Plan-Do-Check-Verify-Retrospect) loop built on Claude Code and GLM-4.7 and shared the prompts and subagent patterns on GitHub; it turns TDD and PDCA ideas into a model-agnostic SDLC shell that risk teams in fintech, biotech and critical infrastructure can accept. Teams report that layered agent stacks, with folder-level manifests plus a meta-agent, cut routine 1–2 day tasks from ~8 hours to ~2–3 hours. DevScribe provides executable workspaces (databases, diagrams, API testing, offline-first). Data backfills are being formalized into PDCVR flows. Alignment tax and scope creep are becoming measurable via agents that watch Jira/Linear/RFC diffs. Immediate takeaway: pilot PDCVR, folder priors, agent topology, and an executable cockpit; expect AI to become engineering infrastructure over the next 12–24 months.
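To make "measurable scope creep" concrete, here is a minimal sketch of what an agent watching ticket diffs might compute. It assumes a ticket's scope can be reduced to a list of acceptance-criteria strings; the function name `scope_diff`, the `creep_ratio` metric and the sample criteria are all hypothetical, not taken from the threads or from any Jira/Linear API.

```python
from typing import Dict, List


def scope_diff(before: List[str], after: List[str]) -> Dict[str, object]:
    """Compare two snapshots of a ticket's acceptance criteria.

    creep_ratio is a hypothetical metric: criteria added since the first
    snapshot, divided by the number of criteria in that first snapshot.
    """
    old, new = set(before), set(after)
    added = sorted(new - old)
    removed = sorted(old - new)
    creep_ratio = len(added) / len(old) if old else float(len(added))
    return {"added": added, "removed": removed, "creep_ratio": creep_ratio}


# Illustrative snapshots of one ticket, taken a few days apart.
monday = ["export CSV", "filter by date"]
friday = ["export CSV", "filter by date", "export PDF", "email report"]

report = scope_diff(monday, friday)
print(report["added"])        # ['email report', 'export PDF']
print(report["creep_ratio"])  # 1.0
```

A ratio of 1.0 here means the ticket doubled in scope after work was planned, which is the kind of signal a dashboard could surface before the deadline slips.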

Inside the AI-Native OS Engineers Use to Ship Software Faster

Published Jan 3, 2026

What if you could cut typical 1–2-day engineering tasks from ~8 hours to ~2–3 hours while keeping quality and traceability? Over the last two weeks (Reddit posts dated 2026-01-02/03), experienced engineers have converged on practical patterns that together form an AI-native operating model: the PDCVR loop (Plan-Do-Check-Verify-Retrospect), which enforces test-first plans and uses Claude Code sub-agents for verification; folder-level manifests plus a meta-agent that rewrites prompts to respect architecture; DevScribe-style executable workspaces that pair schemas, queries, diagrams and APIs; data backfills treated as idempotent platform workflows; coordination agents that quantify the “alignment tax”; and AI todo routers that consolidate Slack/Jira/Sentry into prioritized work. Together these raise throughput, preserve traceability and safety for sensitive domains like fintech and biotech, and shift migrations and scope control from heroic one-offs to platform responsibilities. Immediate moves: adopt PDCVR, add folder priors, build agent hierarchies, and pilot an executable workspace.

How AI Is Rewiring Software Engineering: PDCVR, Agents, Executable Workspaces

Published Jan 3, 2026

What if a typical 1–2 day engineering task dropped from ~8 hours to ~2–3 hours? In the last two weeks, practitioners (Reddit threads dated Jan 2–3, 2026) showed how: an AI-native SDLC loop called PDCVR (Plan-Do-Check-Verify-Retrospect) built on Claude Code and GLM-4.7, folder-level priors plus a prompt-rewriting meta-agent, executable workspaces like DevScribe, repeatable data-migration/backfill patterns, and tools to surface the “alignment tax.” PDCVR requires repo scans, TDD plans, small diffs, sub-agents that run builds and tests (open-sourced in .claude on GitHub, Jan 3, 2026), and LLM retrospectives. Reported gains: common fixes go from ~8 hours to ~2–3 hours with 20-minute prompts and short PR loops. Bottom line: teams in fintech, healthtech, trading and regulated sectors should adopt these operating models (PDCVR, multi-level agents, executable docs, migration frameworks) and tie them to speed, quality, and risk metrics.
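The control flow of such a loop can be sketched in a few lines. This is an illustration under stated assumptions, not the published prompts: the function `run_pdcvr`, the retry limit, and the reading of "check" as a diff review and "verify" as a build/test gate are all hypothetical, and each stage is a plain callable standing in for an LLM or sub-agent call.

```python
from typing import Callable, Dict

Stage = Callable[[dict], bool]


def run_pdcvr(task: str, stages: Dict[str, Stage], max_attempts: int = 3) -> dict:
    """Plan once, repeat Do -> Check -> Verify until both gates pass, then Retrospect."""
    state = {"task": task, "log": [], "passed": False}
    stages["plan"](state)
    state["log"].append("plan")
    for _ in range(max_attempts):
        stages["do"](state)
        state["log"].append("do")
        state["log"].append("check")
        if not stages["check"](state):   # assumed: review of the small diff
            continue
        state["log"].append("verify")
        if stages["verify"](state):      # assumed: build and test run
            state["passed"] = True
            break
    stages["retrospect"](state)          # runs whether or not the task passed
    state["log"].append("retrospect")
    return state


# Demo: the verify gate fails on the first attempt and passes on the second.
calls = {"verify": 0}


def flaky_verify(state: dict) -> bool:
    calls["verify"] += 1
    return calls["verify"] >= 2


demo = run_pdcvr(
    "fix rounding bug",
    {
        "plan": lambda s: True,
        "do": lambda s: True,
        "check": lambda s: True,
        "verify": flaky_verify,
        "retrospect": lambda s: True,
    },
)
print(demo["log"])
# ['plan', 'do', 'check', 'verify', 'do', 'check', 'verify', 'retrospect']
```

Keeping the retrospect step outside the retry loop means a failed task still produces lessons learned, which matches the loop's emphasis on recording what went wrong.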

Inside the AI Operating Fabric Transforming Engineering: PDCVR, Agents, Workspaces

Published Jan 3, 2026

Losing time to scope creep and brittle AI output? In the past two weeks engineers documented concrete practices showing that AI is becoming the operating fabric of engineering work: PDCVR (Plan-Do-Check-Verify-Retrospect), documented 2026-01-03 for Claude Code and GLM-4.7 with GitHub prompt templates, gives an AI-native SDLC wrapper; multi-agent hierarchies (folder-level instructions plus a prompt-rewriting meta-agent) cut typical 1–2 day monorepo tasks from ~8 hours to ~2–3 hours (reported 2026-01-02); DevScribe (2026-01-03) offers executable docs (DB queries, diagrams, REST client, offline-first); engineers pushed reusable data backfill/migration patterns (2026-01-02); posts flagged an “alignment tax” on throughput (2026-01-02/03); and founders prototyped AI todo routers that aggregate Slack/Jira/Sentry (2026-01-02). Immediate takeaway: implement PDCVR-style loops, agent hierarchies, executable workspaces and alignment-aware infra, and measure the impact.
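A rough sketch of how folder-level instructions and a prompt-rewriting step might fit together follows. It assumes instructions are keyed by folder path and that the meta-agent's job can be approximated by prepending every rule on the path from the repo root to the target folder; the names `folder_chain`, `expand_prompt` and the sample rules are hypothetical, and a real meta-agent would call an LLM rather than format a string.

```python
from pathlib import PurePosixPath
from typing import Dict, List


def folder_chain(target: str) -> List[str]:
    """Folders from the repo root (".") down to target, outermost first."""
    path = PurePosixPath(target)
    return [str(p) for p in reversed(path.parents)] + [str(path)]


def expand_prompt(short_prompt: str, target: str, manifests: Dict[str, str]) -> str:
    """Attach every applicable folder rule to a short human prompt."""
    rules = [manifests[f] for f in folder_chain(target) if f in manifests]
    lines = [f"Task: {short_prompt}", f"Target folder: {target}", "Rules (outermost first):"]
    lines += [f"- {rule}" for rule in rules]
    return "\n".join(lines)


# Hypothetical manifests for a small monorepo, one rule per folder.
manifests = {
    ".": "Write a failing test before changing code.",
    "services/billing": "Represent money as Decimal, never float.",
}

print(folder_chain("services/billing"))
# ['.', 'services', 'services/billing']
print(expand_prompt("fix invoice rounding", "services/billing", manifests))
```

Ordering the rules outermost first lets a deeper folder refine or override a repo-wide rule simply by appearing later in the prompt.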

AI as Engineer: From Autocomplete to Process-Aware Collaborator

Published Jan 3, 2026

Your team’s code is fast but fragile. In the last two weeks engineers, not vendors, published practical patterns to make LLMs safe and productive. On 2026-01-03 a senior engineer released PDCVR (Plan-Do-Check-Verify-Retrospect), built on Claude Code and GLM-4.7, with prompts and sub-agents on GitHub; it embeds planning, TDD, build verification, and retrospectives as an AI-native SDLC layer for risk-sensitive systems. On 2026-01-02 others showed folder-level repo manifests plus a prompt-rewriting meta-agent that cut routine 1–2-day tasks from ~8 hours to ~2–3 hours. Tooling shifted too: DevScribe (site checked 2026-01-03) offers executable, offline docs with DBs, diagrams, and API testing. Engineers also pushed reusable data-migration patterns, highlighted the “alignment tax,” and prototyped Slack/Jira/Sentry aggregators. Bottom line: treat AI as a process participant, and build frameworks, guardrails, and observability now.

AI Is Becoming the Operating System for Software Teams

Published Jan 3, 2026

Drowning in misaligned work and slow delivery? In the last two weeks senior engineers sketched what is changing and why it matters: AI is becoming an operating system for software teams, and this summary tells you what to expect and do. Teams are shifting from ad-hoc prompting to repeatable, auditable frameworks like Plan-Do-Check-Verify-Retrospect (PDCVR), implemented on Claude Code and GLM-4.7 with prompts and sub-agents open-sourced (Reddit, 2026-01-03), and cutting error loops with TDD and build-verification agents. Hierarchical agents plus folder manifests trim a typical task from ~8 hours to ~2–3 hours (20-minute prompt, 2–3 feedback loops, ~1 hour testing). Tools like DevScribe collapse docs, queries, diagrams, and API tests into executable workspaces. Data backfills need platform controllers with checkpointing and roll-forward/rollback. The biggest ops win: alignment-aware dashboards and AI todo aggregators that expose scope creep and speed decisions. Immediate takeaway: harden workflows, add agent tiers, and invest in alignment tooling now.
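Checkpointing is the piece of a backfill controller that is easiest to show in code. The sketch below is an assumption-laden illustration, not a description of any team's platform: rows carry a positive integer `id`, writes are upserts keyed by that id (so repeating one is harmless), and the checkpoint is a plain dict. The `crash_before_batch` parameter is a test hook invented for the demo, and rollback is not covered.

```python
from typing import Callable, Dict, List, Optional


def run_backfill(
    rows: List[dict],
    target: Dict[int, dict],
    checkpoint: Dict[str, int],
    transform: Callable[[dict], dict],
    batch_size: int = 2,
    crash_before_batch: Optional[int] = None,  # demo-only hook to simulate a failure
) -> None:
    """Upsert transformed rows in id order, saving a checkpoint after each batch."""
    last_done = checkpoint.get("last_id", 0)
    pending = sorted((r for r in rows if r["id"] > last_done), key=lambda r: r["id"])
    for batch_no, start in enumerate(range(0, len(pending), batch_size)):
        if crash_before_batch == batch_no:
            raise RuntimeError("simulated crash")
        batch = pending[start:start + batch_size]
        for row in batch:
            target[row["id"]] = transform(row)   # upsert: safe to repeat
        checkpoint["last_id"] = batch[-1]["id"]  # roll-forward marker


# Hypothetical migration: convert integer cents to a unit amount.
rows = [{"id": i, "amount_cents": i * 100} for i in range(1, 6)]


def to_units(row: dict) -> dict:
    return {"id": row["id"], "amount": row["amount_cents"] / 100}


target: Dict[int, dict] = {}
checkpoint: Dict[str, int] = {}

try:
    run_backfill(rows, target, checkpoint, to_units, crash_before_batch=1)
except RuntimeError:
    pass  # ids 1 and 2 are written and the checkpoint records id 2

run_backfill(rows, target, checkpoint, to_units)  # resumes at id 3
run_backfill(rows, target, checkpoint, to_units)  # nothing left to do
print(sorted(target), checkpoint)  # [1, 2, 3, 4, 5] {'last_id': 5}
```

Because the checkpoint only advances after a batch is fully written, a crash mid-batch repeats at most one batch on restart, and the upsert makes that repeat harmless.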

PDCVR and Agentic Workflows Industrialize AI-Assisted Software Engineering

Published Jan 3, 2026

If your team is losing a day to routine code changes, listen: Reddit posts from 2026-01-02/03 show practitioners cutting typical 1–2-day tasks from ~8 hours to about 2–3 hours by combining a Plan-Do-Check-Verify-Retrospect (PDCVR) loop with multi-level agents, and this summary tells you what they did and why it matters. PDCVR (reported 2026-01-03) runs in Claude Code with GLM-4.7, forces RED→GREEN TDD in planning, keeps diffs small, uses build-verification and role subagents (.claude/agents), and records lessons learned. Separate posts (2026-01-02) show folder-level instructions and a prompt-rewriting meta-agent turning vague requests into high-fidelity prompts, with ~20 minutes for the initial prompt, 10–15 minutes per PR loop, and ~1 hour for testing. Tools like DevScribe make docs executable (DB queries, ERDs, API tests). Bottom line: teams are industrializing AI-assisted engineering; your immediate next step is to instrument reproducible evals (PR time, defect rates, rollbacks) and correlate them with AI use.
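The time budget above is easy to check by hand. The short calculation below adds up the reported components, assuming the 2–3 feedback loops mentioned in the related summary on this page; the function name `task_minutes` is illustrative only.

```python
def task_minutes(prompt_min: int, loops: int, loop_min: int, testing_min: int) -> int:
    """Total hands-on minutes: initial prompt + PR loops + testing."""
    return prompt_min + loops * loop_min + testing_min


low = task_minutes(prompt_min=20, loops=2, loop_min=10, testing_min=60)
high = task_minutes(prompt_min=20, loops=3, loop_min=15, testing_min=60)

print(f"{low} min = {low / 60:.1f} h")    # 100 min = 1.7 h
print(f"{high} min = {high / 60:.1f} h")  # 125 min = 2.1 h
```

The itemized components sum to roughly 1.7–2.1 hours, at or just below the low end of the reported ~2–3 hours; the posts as summarized here do not break down the remainder.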

Meet the AI Agents That Build, Test, and Ship Your Code

Published Dec 6, 2025

Tired of bloated “vibe-coded” PRs? Here’s what you’ll get: the change, why it matters, and immediate actions. Over the past two weeks multiple launches and previews showed AI-native coding agents moving out of the IDE and into the full software delivery lifecycle: planning, implementing, testing and iterating across entire repositories (often indexed at millions of tokens). These agentic dev environments integrate with test runners, linters and CI, run multi-agent workflows (planner, coder, tester, reviewer), and close the loop from intent to a pull request. That matters because teams can accelerate prototype-to-production cycles but must manage costs, latency and trust: expect hybrid or self-hosted models, strict zoning (green/yellow/red), test-first workflows, telemetry and governance (permissions, logs, policy). Immediate steps: make codebases agent-friendly, require staged approvals for critical systems, build prompt/pattern libraries, and treat agents as production services to monitor and re-evaluate.
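Zoning plus staged approvals can be expressed as a small policy check that runs before an agent's pull request is merged. The sketch below is one possible shape, not a reported implementation: the path patterns, the approval counts per zone and the function names are all assumptions made for illustration.

```python
from fnmatch import fnmatchcase
from typing import Iterable

# Hypothetical policy: first matching zone wins, anything unmatched is green.
ZONES = [
    ("red", ["payments/*", "infra/prod/*"]),
    ("yellow", ["services/*"]),
]
# Assumed number of human approvals an agent-authored change needs per zone.
APPROVALS = {"green": 0, "yellow": 1, "red": 2}


def zone_for(path: str) -> str:
    """Classify one changed file path into a zone."""
    for zone, patterns in ZONES:
        if any(fnmatchcase(path, pattern) for pattern in patterns):
            return zone
    return "green"


def required_approvals(changed_paths: Iterable[str]) -> int:
    """A change set inherits the strictest zone among the files it touches."""
    return max((APPROVALS[zone_for(p)] for p in changed_paths), default=0)


print(zone_for("docs/readme.md"))                                   # green
print(zone_for("services/api/app.py"))                              # yellow
print(required_approvals(["docs/readme.md", "payments/ledger.py"])) # 2
```

Taking the maximum across files means a single red-zone file escalates the whole pull request, which keeps agents from slipping a critical change in alongside harmless edits.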

AI-Native Trading: Models, Simulators, and Agentic Execution Take Over

Published Dec 6, 2025

Worried you’ll be outpaced by AI-native trading stacks? Read this and you’ll know what changed and what to do. In the past two weeks industry moves and research have fused large generative models, high-performance market simulation, and low-latency execution: NVIDIA says over 50% of new H100/H200 cluster deals in financial services list trading and generative AI as primary workloads (NVIDIA, 2025-11), and cloud providers updated GPU stacks between 2025-11 and 2025-12. New tools can generate tens of thousands of synthetic years of limit-order-book data on one GPU, train RL agents against co-evolving adversaries, and oversample crisis scenarios, shifting training from historical backtests to simulated multiverses. That raises real risks (opaque RL policies, strategy monoculture from LLM-assisted coding, data leakage). Immediate actions: inventory generative dependencies, segregate research from production models, enforce access controls, and use sandboxed shadow mode; over the next 6–12 months, monitor GPU usage, simulator open-sourcing, and AI-linked market anomalies.