AI Embeds Everywhere: Agentic Workflows, On‐Device Inference, Enterprise Tooling

Published Jan 4, 2026

Still juggling tool sprawl and model hype? In the last two weeks (Dec 19–Jan 3), major vendors shifted focus from one-off models to systems you'll have to integrate: OpenAI expanded Deep Research (Dec 19) to support multi-hour agentic research runs; Qualcomm benchmarked Snapdragon NPUs at 75+ TOPS (Dec 23) as Google and Apple pushed on-device inference; Meta and Mistral published distillation recipes (Dec 26–29) to compress 70B models into 8–13B variants for on-prem use; observability tools (Arize, W&B, LangSmith) added agent traces and evals (Dec 23–29); quantum vendors realigned to logical-qubit roadmaps (IBM et al., Dec 22–29); and biotech firms (Insilico, Recursion) reported AI-driven pipelines and 30 PB of imaging data (Dec 26–27). Why it matters: expect hybrid cloud/device stacks, tighter governance, lower inference cost, and new platform engineering priorities. Start mapping model, hardware, and observability paths now.

Agentic AI Is Taking Over Engineering: From Code to Incidents and Databases

Published Jan 4, 2026

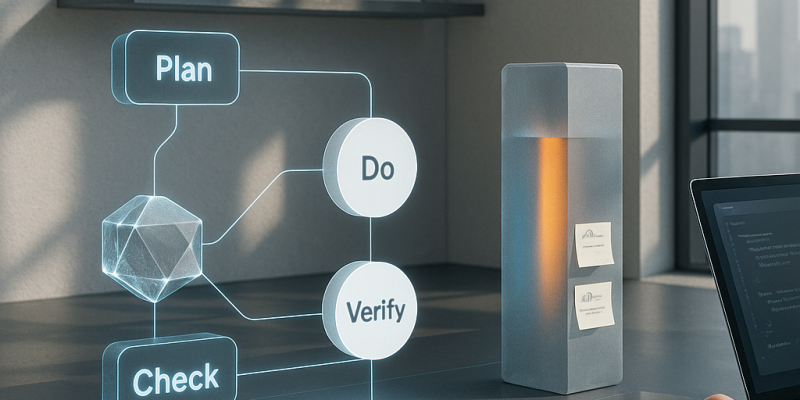

If messy backfills, one-off prod fixes, and overflowing tickets keep you up, here's what changed in the last two weeks and what to do next. Vendors and OSS projects shipped agentic, multi-agent coding features in late December (Anthropic 2025-12-23; Cursor, Windsurf; AutoGen 0.4 on 2025-12-22; LangGraph 0.2 on 2025-12-21) so LLMs can plan, implement, test, and iterate across repos. On-device moves accelerated (Apple Private Cloud Compute update 2025-12-26; Qualcomm/MediaTek benchmarks mid-December), making private, low-latency assistants practical. Data and migration tooling added LLM helpers (Snowflake Dynamic Tables 2025-12-23; Databricks Delta Live Tables 2025-12-21), but expect humans to own the PDCVR loop (Plan, Do, Check, Verify, Retrospect). Database change management and just-in-time audited access got product updates (PlanetScale/Neon, Liquibase, Flyway, Teleport, StrongDM in December). Action: adopt agentic workflows cautiously, run AI drafts through your PDCVR and PR/audit gates, and prioritize on-device options for sensitive code.

From PDCVR to Agent Stacks: Inside the AI Native Engineering Operating Model

Published Jan 3, 2026

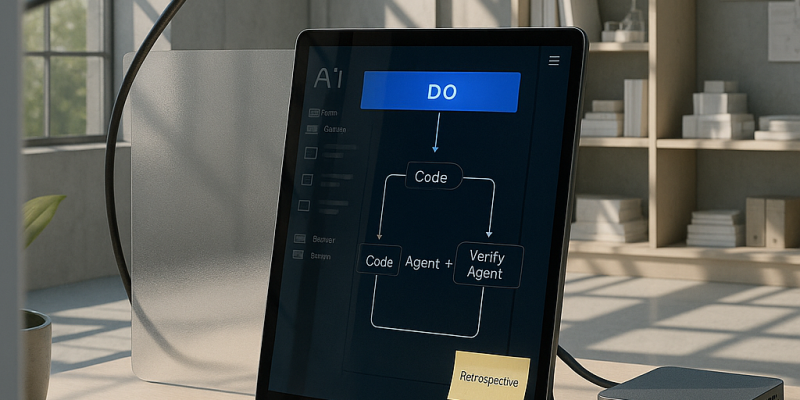

Losing engineer hours to scope creep and brittle AI hacks? On Jan 2–3, 2026, practitioners published concrete patterns showing that AI is being industrialized into an operating model you can copy. The patterns include a PDCVR loop (Plan–Do–Check–Verify–Retrospect) around LLM coding, with repo-governed, model-agnostic checks and Claude Code sub-agents for build and test; a three-tier agent stack with folder-level manifests and a prompt-rewriting meta-agent that cut typical 1–2 day tickets from ≈8 hours to ≈2–3 hours; DevScribe-style offline workspaces that co-host code, schemas, queries, diagrams, and API tests; standardized, idempotent backfill patterns for auditable migrations; and "coordination-aware" agents to measure the alignment tax. If you want short-term productivity and auditable risk controls, start piloting PDCVR, repo policies, an executable workspace, and migration primitives now.

From PDCVR to Agent Stacks: The AI‐Native Engineering Blueprint

Published Jan 3, 2026

Been burned by buggy AI code or chaotic agents? Over the past 14 days, practitioners sketched an AI-native operating model you can use as a blueprint. A senior engineer (2026-01-03) formalized PDCVR (Plan, Do, Check, Verify, Retrospect) using Claude Code with GLM-4.7 to enforce TDD, small scoped loops, agent-based verification, and recorded retrospectives. Another thread (2026-01-02) shows multi-level agent stacks: folder-level manifests plus a meta-agent that turns short prompts into executable specs, cutting typical 1–2 day tasks from ~8 hours to ~2–3 hours. DevScribe (docs 2026-01-03) offers an offline, executable workspace for code, queries, diagrams, and tests. Teams also frame data backfills as platform work (2026-01-02) and treat coordination drag as an "alignment tax" to be monitored by sentry agents (2026-01-02 to 2026-01-03). The immediate question isn't "use agents?" but "which operating model and metrics will you embed?"
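As a rough illustration of the loop shape described above, here is a minimal sketch of a PDCVR driver. It is not the published workflow: the `Phase` enum, `run_pdcvr`, the handler callables, and the retry limit are all hypothetical names chosen for this example, and the sketch assumes a failed Check or Verify sends the work back to Do.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List


class Phase(Enum):
    PLAN = "plan"
    DO = "do"
    CHECK = "check"
    VERIFY = "verify"
    RETROSPECT = "retrospect"


@dataclass
class LoopResult:
    succeeded: bool
    iterations: int
    history: List[Phase] = field(default_factory=list)


def run_pdcvr(handlers: Dict[Phase, Callable[[], bool]],
              max_iterations: int = 3) -> LoopResult:
    """Run Plan once, then Do/Check/Verify until both gates pass or the
    iteration budget is spent. Retrospect always runs last.

    Each handler is a hypothetical callable returning True on success.
    """
    history: List[Phase] = []

    def step(phase: Phase) -> bool:
        history.append(phase)
        return bool(handlers[phase]())

    succeeded = False
    iterations = 0
    if step(Phase.PLAN):
        while iterations < max_iterations and not succeeded:
            iterations += 1
            step(Phase.DO)
            # Verify is only attempted when Check has passed.
            succeeded = step(Phase.CHECK) and step(Phase.VERIFY)
    step(Phase.RETROSPECT)
    return LoopResult(succeeded, iterations, history)
```

In practice the handlers would wrap whatever the team uses for each phase (a planning prompt, a test run, a review agent); the driver only fixes the order and the retry rule.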

AI Becomes an Operating Layer: PDCVR, Agents, and Executable Workspaces

Published Jan 3, 2026

You're losing hours to coordination and rework. Over the last 14 days, practitioners (posts dated 2026-01-02/03) showed how AI is shifting from a tool to an operating layer that cuts typical 1–2 day tickets from ~8 hours to ~2–3 hours. Read on and you'll get the concrete patterns to act on: a published Plan–Do–Check–Verify–Retrospect (PDCVR) workflow (GitHub, 2026-01-03) that embeds tests, multi-agent verification, and retrospectives into the SDLC; folder-level manifests plus a prompt-rewriting meta-agent that preserve architecture and speed execution; DevScribe-style executable workspaces for local DB/API runs and diagrams; structured AI-assisted data backfills; and "alignment tax" monitoring agents to surface coordination risk. For your org, the next steps are clear: pick an operating model, pilot PDCVR and folder policies in a high-risk stack (fintech/digital-health), and instrument alignment metrics.

AI‐Native Operating Models: PDCVR, Agent Stacks, and Executable Workspaces

Published Jan 3, 2026

Burning hours on fragile code, migrations, and alignment? In the last two weeks (posts dated 2026-01-02/03), practitioners sketched public blueprints showing how LLMs and agents are being embedded into real engineering work; what you'll get here are the patterns to adopt. Engineers describe a Plan–Do–Check–Verify–Retrospect (PDCVR) loop (Claude Code, GLM-4.7) that wraps codegen in governance and TDD; multi-level agent stacks plus folder-level manifests that make repos act as soft policy engines; and a meta-agent flow that cut typical 1–2 day tasks from ~8 hours to ~2–3 hours (20-minute prompt, 2–3 short loops, ~1 hour testing). DevScribe-style executable workspaces, governed data-backfill workflows, and coordination-aware agents complete the model. Why it matters: faster delivery, clearer risk controls, and a measurable "alignment tax" for regulated fintech, trading, and health teams. Immediate takeaway: start piloting PDCVR, folder policies, executable workspaces, and coordination agents.
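To make the "soft policy engine" idea concrete, here is one possible sketch of a folder-level manifest lookup. The summary does not specify a manifest format, so everything here is an assumption for illustration: the `MANIFESTS` dictionary, its `allow_edits` and `forbidden_imports` keys, and the helper names are hypothetical.

```python
from pathlib import PurePosixPath
from typing import Dict, List, Optional

# Hypothetical manifests keyed by folder; a real setup would more likely
# load one manifest file per folder from the repository.
MANIFESTS: Dict[str, dict] = {
    "src": {"allow_edits": True, "forbidden_imports": []},
    "src/billing": {"allow_edits": True, "forbidden_imports": ["requests"]},
    "src/generated": {"allow_edits": False, "forbidden_imports": []},
}


def nearest_manifest(file_path: str) -> Optional[dict]:
    """Return the manifest of the closest enclosing folder, if any."""
    for parent in PurePosixPath(file_path).parents:
        manifest = MANIFESTS.get(parent.as_posix())
        if manifest is not None:
            return manifest
    return None


def violations(file_path: str, new_imports: List[str]) -> List[str]:
    """List policy problems for a proposed edit to file_path."""
    manifest = nearest_manifest(file_path)
    if manifest is None:
        return []
    problems = []
    if not manifest["allow_edits"]:
        problems.append("edits are not allowed in this folder")
    for name in new_imports:
        if name in manifest["forbidden_imports"]:
            problems.append(f"import '{name}' is forbidden in this folder")
    return problems
```

An agent wrapper could call `violations` before applying a change and feed any problems back into the prompt, which is one way a repo can constrain edits without hard-blocking them.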

AI‐Native Operating Models: How Agents Are Rewriting Engineering Workflows

Published Jan 3, 2026

Struggling with slow, risky engineering work? In the past 14 days (posts dated Jan 2–3, 2026) practitioners published concrete frameworks showing AI moving from toy to governed teammate—what you get here are practical primitives you can act on now. They surfaced PDCVR (Plan–Do–Check–Verify–Retrospect) as a daily, test‐driven loop for AI code, folder‐level manifests plus a prompt‐rewriting meta‐agent to keep agents aligned with architecture, and measurable wins (typical 1–2 day tasks fell from ~8 hours to ~2–3 hours). They compared executable workspaces (DevScribe) that bundle DB connectors, diagrams, and offline execution, outlined AI‐assisted, idempotent backfill patterns crucial for fintech/trading/health, and named “alignment tax” as a coordination problem agents can monitor. Bottom line: this isn’t just model choice anymore—it’s an operating‐model design problem; expect teams to adopt PDCVR, folder policies, and coordination agents next.
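For readers unfamiliar with idempotent backfills, the sketch below shows the basic property: rerunning the job changes nothing once the data is filled. It uses Python's built-in `sqlite3` with a hypothetical `payments` table and a hypothetical default value; it illustrates the general pattern and is not taken from the posts summarized above.

```python
import sqlite3


def backfill_currency(conn: sqlite3.Connection,
                      batch_size: int = 2,
                      default: str = "USD") -> int:
    """Fill NULL currency values in small batches.

    Only rows that still need the value are touched, so a second run
    (or a retry after a crash) updates nothing. Returns rows updated.
    """
    total = 0
    while True:
        cur = conn.execute(
            "UPDATE payments SET currency = ? WHERE id IN ("
            "  SELECT id FROM payments WHERE currency IS NULL"
            "  ORDER BY id LIMIT ?)",
            (default, batch_size),
        )
        conn.commit()  # commit per batch so progress survives interruption
        if cur.rowcount == 0:
            return total
        total += cur.rowcount


# Hypothetical demo data: three rows are missing a currency.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE payments (id INTEGER PRIMARY KEY, amount REAL, currency TEXT)"
)
conn.executemany(
    "INSERT INTO payments (amount, currency) VALUES (?, ?)",
    [(10.0, None), (20.0, "EUR"), (5.0, None), (7.5, None)],
)
first_run = backfill_currency(conn)
second_run = backfill_currency(conn)
print(first_run, second_run)
```

The `WHERE currency IS NULL` guard is what makes the job safe to rerun; existing values such as the `EUR` row are never overwritten.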

How Agentic AI Became an Engineering OS: PDCVR, Meta‐Agents, DevScribe

Published Jan 3, 2026

What if a routine 1–2 day engineering task that used to take ~8 hours now takes ≈2–3 hours? Over the last 14 days (posts dated 2026‐01‐02 and 2026‐01‐03), engineers report agentic AI entering a second phase: teams are formalizing an AI‐native operating model around PDCVR (Plan–Do–Check–Verify–Retrospect) using Claude Code and GLM‐4.7, stacking meta‐agents + coding agents constrained by folder‐level manifests, and running work in executable DevScribe‐style workspaces. That matters because it turns AI into a controllable collaborator for high‐stakes domains—fintech, trading, digital‐health—speeding delivery, enforcing invariants, enabling tested migrations, and surfacing an “alignment tax” of coordination overhead. Key actions shown: institute PDCVR loops, add repo‐level policies, deploy meta‐agents and VERIFY agents, and instrument alignment to manage risk as AI moves from experiment to production.

AI Rewrites Engineering: From Autocomplete to Operating System

Published Jan 3, 2026

Engineers are reporting a productivity and governance breakthrough: in the last 14 days (posts dated 2026‐01‐02/03) practitioners described a repeatable blueprint—PDCVR (Plan–Do–Check–Verify–Retrospect), folder‐level policies, meta‐agents, and execution workspaces like DevScribe—that moves LLMs and agents from “autocomplete” to an engineering operating model. You get concrete wins: open‐sourced PDCVR prompts and Claude Code agents on GitHub (2026‐01‐03), Plan+TDD discipline, folder manifests that prevent architectural drift, and a meta‐agent that cuts a typical 1–2 day ticket from ≈8 hours to ~2–3 hours. Teams also framed data backfills as governed workflows and named “alignment tax” as a coordination problem agents can monitor. If you care about velocity, risk, or compliance in fintech/trading/digital‐health, the immediate takeaway is clear: treat AI as an architectural question—adopt PDCVR, folder priors, executable docs, governed backfills, and alignment‐watching agents.

How AI Became the Governed Worker Powering Modern Engineering Workflows

Published Jan 3, 2026

Teams are turning AI from an oracle into a governed worker, cutting typical 1–2 day, ~8-hour tickets to about 2–3 hours, by formalizing workflows and agent stacks. Over Jan 2–3, 2026, practitioners documented a Plan–Do–Check–Verify–Retrospect (PDCVR) loop that makes LLMs produce stepwise plans, RED→GREEN tests, and self-audits, and that uses clustered Claude Code sub-agents to run builds and verification. Folder-level manifests, combined with a meta-agent that rewrites short prompts into file-specific instructions, reduce architecture-breaking edits and speed throughput (≈20 minutes to craft the prompt, 2–3 short feedback loops, ~1 hour manual testing). DevScribe-style workspaces let agents execute queries, run tests, and view schemas offline. The same patterns apply to data backfills and to lowering the measurable "alignment tax" by surfacing dependencies and missing reviewers. Bottom line: your advantage will come from designing the system that bounds and measures AI, not just picking a model.
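The reported numbers can be checked with a little arithmetic. The sketch below computes the reduction and speedup implied by going from ~8 hours to 2–3 hours, and the time left for the feedback loops once the ~20-minute prompt and ~1 hour of testing are subtracted. That last figure is an inference, not a reported measurement: it assumes those three components account for the whole ticket.

```python
BASELINE_HOURS = 8.0                # reported typical effort before
REPORTED_RANGE_HOURS = (2.0, 3.0)   # reported effort after
PROMPT_HOURS = 20 / 60              # ~20 minutes to craft the prompt
TESTING_HOURS = 1.0                 # ~1 hour of manual testing


def reduction(baseline: float, new: float) -> float:
    """Fraction of time saved relative to the baseline."""
    return 1 - new / baseline


def speedup(baseline: float, new: float) -> float:
    """How many times faster the new figure is."""
    return baseline / new


def implied_loop_hours(total: float) -> float:
    """Time left for feedback loops, assuming prompt + loops + testing
    make up the whole ticket (an assumption of this sketch)."""
    return total - PROMPT_HOURS - TESTING_HOURS


for total in REPORTED_RANGE_HOURS:
    print(
        f"{total:.0f} h: saves {reduction(BASELINE_HOURS, total):.1%}, "
        f"{speedup(BASELINE_HOURS, total):.2f}x faster, "
        f"{implied_loop_hours(total) * 60:.0f} min left for loops"
    )
```

Under these assumptions the claim amounts to a 62.5–75% reduction, with roughly 40–100 minutes spread across the 2–3 feedback loops.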