How AI Became Your Colleague: The New AI-Native Engineering Playbook

Published Jan 3, 2026

If your teams are losing days to rework, pay attention: over Jan 2–3, 2026, engineers shared concrete practices that make AI a predictable, auditable colleague. The result is a compact playbook built on PDCVR (Plan–Do–Check–Verify–Retrospect) for Claude Code and GLM‐4.7: plan with RED→GREEN TDD, have the model write failing tests and iterate until they pass, run completeness checks, use Claude Code sub‐agents to run builds and tests, and log lessons for the next cycle (GitHub templates published 2026‐01‐03). Paired with folder‐level specs and a prompt‐rewriting meta‐agent, typical 1–2 day tasks fell from ~8 hours to ~2–3 hours: a 20‐minute prompt, a few 10–15 minute feedback loops, and ~1 hour of testing (Reddit, 2026‐01‐02). DevScribe‐style executable, offline workspaces, reusable migration/backfill frameworks, alignment‐monitoring agents, and AI “todo routers” complete the stack. Bottom line: adopt PDCVR, agent hierarchies, and executable workspaces to cut cycle time and make AI collaboration auditable, and start by piloting these patterns in safety‐sensitive flows.
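The reported time budget can be sanity-checked with a few lines of arithmetic. This is a minimal sketch: the prompt, loop, and testing durations come from the summary above, while the loop count for "a few" (3 to 5 here) and the helper name `task_minutes` are assumptions made for illustration.

```python
# Back-of-the-envelope check of the reported per-task time budget.
# Assumption: "a few" feedback loops means 3 to 5; the post gives no exact count.

PROMPT_MIN = 20      # initial prompt, in minutes (from the summary)
TESTING_MIN = 60     # testing, in minutes (from the summary, approximate)
BASELINE_HOURS = 8   # reported effort before the workflow


def task_minutes(loops: int, loop_min: int) -> int:
    """Total minutes for one task: prompt + feedback loops + testing."""
    return PROMPT_MIN + loops * loop_min + TESTING_MIN


low = task_minutes(loops=3, loop_min=10)    # fewest, shortest loops
high = task_minutes(loops=5, loop_min=15)   # most, longest loops

print(f"estimated range: {low / 60:.1f} to {high / 60:.1f} hours")
print(f"speedup vs {BASELINE_HOURS} h baseline: "
      f"{BASELINE_HOURS * 60 / high:.1f}x to {BASELINE_HOURS * 60 / low:.1f}x")
```

Under these assumptions the total lands at roughly 1.8 to 2.6 hours, which is in line with the reported ~2–3 hours.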

AI Is Becoming the Operating System for Software Teams

Published Jan 3, 2026

Drowning in misaligned work and slow delivery? Over the last two weeks, senior engineers sketched what is changing and why it matters: AI is becoming an operating system for software teams. This summary covers what to expect and what to do. Teams are shifting from ad‐hoc prompting to repeatable, auditable frameworks like Plan–Do–Check–Verify–Retrospect (PDCVR), implemented on Claude Code + GLM‐4.7 with prompts and sub‐agents open‐sourced (Reddit, 2026‐01‐03), and are cutting error loops with TDD and build‐verification agents. Hierarchical agents plus folder manifests trim a typical 1–2 day task from ~8 hours to ~2–3 hours (20‐minute prompt, 2–3 feedback loops, ~1 hour testing). Tools like DevScribe collapse docs, queries, diagrams, and API tests into executable workspaces. Data backfills need platform‐level controllers with checkpointing and roll‐forward/rollback. The biggest ops win: alignment‐aware dashboards and AI todo aggregators that expose scope creep and speed decisions. Immediate takeaway: harden workflows, add agent tiers, and invest in alignment tooling now.
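To make the backfill point concrete, here is a minimal sketch of a checkpointed backfill loop that can resume (roll forward) after a crash. It is not taken from the posts: `run_backfill`, the JSON checkpoint format, and the simulated failure are all hypothetical, and rollback is left out for brevity.

```python
import json
import os
import tempfile
from typing import Callable, List, Sequence


def run_backfill(rows: Sequence[int], apply: Callable[[int], None],
                 checkpoint_path: str, batch_size: int = 2) -> int:
    """Apply `apply` to each row in batches, saving progress after each batch.

    Returns the number of rows processed in this call. A crash inside a batch
    means that batch is retried from its start, so `apply` must be idempotent.
    """
    offset = 0
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as fh:
            offset = json.load(fh)["offset"]

    processed = 0
    while offset < len(rows):
        batch = rows[offset:offset + batch_size]
        for row in batch:
            apply(row)
        offset += len(batch)
        processed += len(batch)
        # Write to a temp file, then rename, so the checkpoint is never half-written.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as fh:
            json.dump({"offset": offset}, fh)
        os.replace(tmp_path, checkpoint_path)
    return processed


# Demo: row 3 fails once, then the job is restarted from the checkpoint.
seen: List[int] = []
fail_once = {"armed": True}


def flaky_apply(row: int) -> None:
    if row == 3 and fail_once["armed"]:
        fail_once["armed"] = False
        raise RuntimeError("simulated crash")
    seen.append(row)


with tempfile.TemporaryDirectory() as workdir:
    ckpt = os.path.join(workdir, "backfill.json")
    try:
        run_backfill([0, 1, 2, 3, 4], flaky_apply, ckpt)
    except RuntimeError:
        pass  # the first run dies partway through the second batch
    resumed = run_backfill([0, 1, 2, 3, 4], flaky_apply, ckpt)

print(resumed, seen)
```

In the demo, row 2 is applied twice because its batch was interrupted before the checkpoint advanced. That is why the write being backfilled needs to be idempotent in a design like this.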

Inside PDCVR: How Agentic AI Boosts Engineering 3–4×

Published Jan 3, 2026

Tired of slow, error‐prone engineering cycles? Posts from Jan 2–3, 2026 show senior engineers codifying agentic coding into a Plan–Do–Check–Verify–Retrospect (PDCVR) workflow, with prompts and agent configs on GitHub: Plan (repo inspection and explicit TDD), Do (tests first, small diffs), Check (compare plan vs. code), Verify (Claude Code sub‐agents run builds/tests), and Retrospect (capture mistakes to seed the next plan). Engineers using multi‐level agents (folder‐level manifests plus a prompt‐rewriting meta‐agent) report roughly 3–4× day‐to‐day gains: typical 1–2 day tasks dropped from ~8 hours to ~2–3 hours. DevScribe appears as an executable, local‐first workspace (DB integration, diagrams, API testing). Data migration, the “alignment tax,” and AI todo aggregators are flagged as platform priorities. Teams that internalize these workflows and tools will define the next phase of AI in engineering.
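The five phases can be read as a simple control loop. The sketch below is one possible shape under stated assumptions, not the published prompts or agent configs: `run_pdcvr`, `CycleLog`, and the stub callables are hypothetical stand-ins for model and sub-agent calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class CycleLog:
    """Audit trail for one task: which phases ran and what was learned."""
    phases: List[str] = field(default_factory=list)
    lessons: List[str] = field(default_factory=list)


def run_pdcvr(plan: Callable[[List[str]], str],
              do: Callable[[str], str],
              check: Callable[[str, str], bool],
              verify: Callable[[str], bool],
              retrospect: Callable[[str, bool], str],
              max_cycles: int = 3) -> CycleLog:
    """Run Plan, Do, Check, Verify, Retrospect until Verify passes or cycles run out."""
    log = CycleLog()
    for _ in range(max_cycles):
        current_plan = plan(log.lessons)       # Plan: seeded by earlier lessons
        log.phases.append("plan")
        diff = do(current_plan)                # Do: tests first, small diff
        log.phases.append("do")
        matches = check(current_plan, diff)    # Check: compare plan vs. code
        log.phases.append("check")
        passed = False
        if matches:                            # Verify only what passed Check
            passed = verify(diff)
            log.phases.append("verify")
        log.lessons.append(retrospect(diff, passed))  # Retrospect: record the lesson
        log.phases.append("retrospect")
        if passed:
            break
    return log


# Demo with stubs: the build fails on the first cycle and passes on the second.
attempts = {"n": 0}


def fake_verify(diff: str) -> bool:
    attempts["n"] += 1
    return attempts["n"] >= 2


log = run_pdcvr(
    plan=lambda lessons: f"plan v{len(lessons) + 1}",
    do=lambda p: f"diff for {p}",
    check=lambda p, d: p in d,
    verify=fake_verify,
    retrospect=lambda d, ok: "tests green" if ok else "build failed: tighten plan",
)
print(log.lessons)
```

Keeping the lessons list as the only state carried between cycles mirrors the idea that Retrospect seeds the next Plan.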

How GPU Memory Virtualization Is Breaking AI's Biggest Bottleneck

Published Dec 6, 2025

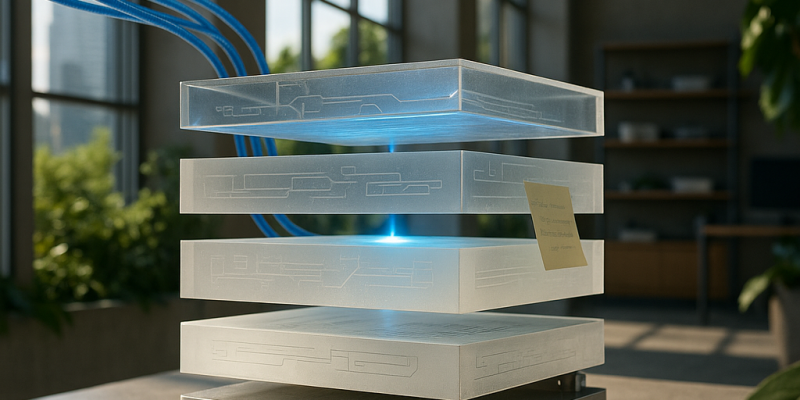

In the last two weeks, GPU memory virtualization and disaggregation moved from infrastructure curiosity to a fast‐moving production trend, because models and simulations increasingly need tens to hundreds of gigabytes of VRAM. This summary covers what's changing, why it matters to your AI, quant, or biotech workloads, and what to do next. The core idea: software‐defined pooled VRAM (virtualized memory, disaggregated pools, and communication‐optimized tensor parallelism) makes many smaller GPUs look like one big memory space. That means you can train larger or more specialized models, host denser agentic workloads, and run bigger Monte Carlo or molecular simulations without buying a new fleet. Tradeoffs: paging latency, new failure modes, and security/isolation risks. Immediate steps: profile memory footprints, adopt GPU‐aware orchestration, refactor for sharding/checkpointing, and plan for mixed hardware generations.
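Profiling can start with a rough static estimate before any measurement. The sketch below assumes mixed-precision training with Adam, for which 16 bytes per parameter is a commonly cited figure (fp16 weights and gradients plus fp32 master weights and two optimizer moments). Activations are ignored, so treat the result as a lower bound. The 7B model, the 24 GiB cards, and the 90% usable fraction are hypothetical inputs.

```python
import math

GIB = 1024 ** 3


def training_bytes(params: int, bytes_per_param: int = 16) -> int:
    """Weights + gradients + optimizer state; excludes activations and buffers."""
    return params * bytes_per_param


def gpus_needed(total_bytes: int, vram_gib: float, usable_fraction: float = 0.9) -> int:
    """Smallest number of equal GPUs whose pooled usable VRAM covers total_bytes."""
    usable_per_gpu = vram_gib * GIB * usable_fraction
    return math.ceil(total_bytes / usable_per_gpu)


need = training_bytes(7_000_000_000)       # hypothetical 7B-parameter model
pool = gpus_needed(need, vram_gib=24)      # hypothetical 24 GiB cards

print(f"model state: {need / GIB:.1f} GiB, pooled across at least {pool} GPUs")
```

A figure like this only says how much memory must exist somewhere in the pool. Paging latency and interconnect bandwidth decide whether pooling it is practical.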

The Shift to Domain‐Specific Foundation Models Every Tech Leader Must Know

Published Dec 6, 2025

If your teams still bet on generic LLMs, you're facing diminishing returns — over the last two weeks the industry has accelerated toward enterprise‐grade, domain‐specific foundation models. You’ll get why this matters, what these stacks look like, and what to watch next. Three forces drove the shift: generic models stumble on niche terminology and protocol rules; high‐quality domain datasets have matured over the last 2–3 years; and tooling for safe adaptation (secure connectors, parameter‐efficient tuning like LoRA/QLoRA, retrieval, and domain evals) is now enterprise ready. Practically, stacks layer a base foundation model, domain pretraining/adaptation, retrieval/tools (backtests, lab instruments, CI), and guardrails. Impact: better correctness, calibrated outputs, and tighter integration into trading, biotech, and engineering workflows — but watch data bias, IP leakage, and regulatory guardrails. Immediate signs to monitor: vendor domain‐tuning blueprints, open‐weight domain models, and platform tooling that treats adaptation and eval as first‐class.
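The appeal of parameter‐efficient tuning is easiest to see as arithmetic. In LoRA, a frozen weight matrix of shape d_out × d_in gets a trainable low‐rank update made of two factors, d_out × r and r × d_in. The sketch below compares parameter counts; the 4096‐wide layer and rank 8 are illustrative choices, not figures from the article.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when fine-tuning the whole weight matrix."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for a rank-r update: (d_out x r) plus (r x d_in)."""
    return rank * (d_in + d_out)


d, r = 4096, 8  # hypothetical layer width and adapter rank
fraction = lora_params(d, d, r) / full_params(d, d)

print(f"LoRA trains {lora_params(d, d, r):,} of {full_params(d, d):,} "
      f"parameters ({fraction:.2%}) for this layer")
```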

From Chatbots to Core: LLMs Become Dev Infrastructure

Published Dec 6, 2025

If your teams are still copy‐pasting chatbot output into editors, you’re living the “vibe coding” pain: massive, hard‐to‐audit diffs and hidden logic changes have pushed many orgs to rethink workflows. Here’s what happened in the last two weeks and what it means for you. Engineers are treating LLMs as first‐class infrastructure: repo‐aware agents that index code, tests, and configs and open contextual PRs; AI running in CI to review code, generate tests, and gate large PRs; and AI copilots parsing logs and drafting postmortems. That shift boosts productivity but raises real risk in fintech, trading, and biotech (e.g., pandas→polars rewrites, pre‐trade check drift). Immediate responses: zone repos (green/yellow/red), log every AI action, and enforce policy engines (on‐prem/VPC for sensitive code). Watch for platform announcements and practitioner case studies to track adoption.
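Repo zoning and action logging can be prototyped in a few lines. This is a sketch under assumptions: the path patterns, the zone‐to‐action mapping, and the function names are hypothetical, and a real policy engine would sit in CI or a gateway instead of in‐process.

```python
import fnmatch
import json
from datetime import datetime, timezone
from typing import List

# Hypothetical zone map: first matching pattern wins; unknown paths default to red.
ZONES = [
    ("src/pretrade/*", "red"),
    ("src/pricing/*", "yellow"),
    ("docs/*", "green"),
    ("tests/*", "green"),
]

# Hypothetical policy: what an AI agent may do in each zone.
ALLOWED = {
    "green": {"read", "propose", "commit"},
    "yellow": {"read", "propose"},
    "red": {"read"},
}


def zone_for(path: str) -> str:
    """Return the zone of a repo path, defaulting to the most restrictive."""
    for pattern, zone in ZONES:
        if fnmatch.fnmatchcase(path, pattern):
            return zone
    return "red"


def authorize(agent: str, action: str, path: str, audit_log: List[str]) -> bool:
    """Decide whether the action is allowed and record the decision either way."""
    zone = zone_for(path)
    allowed = action in ALLOWED[zone]
    audit_log.append(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "path": path,
        "zone": zone,
        "allowed": allowed,
    }))
    return allowed


audit: List[str] = []
docs_ok = authorize("review-bot", "commit", "docs/readme.md", audit)
pretrade_ok = authorize("review-bot", "commit", "src/pretrade/checks/limits.py", audit)
print(docs_ok, pretrade_ok, len(audit))
```

Logging denied requests as well as allowed ones keeps the audit trail useful for spotting agents that repeatedly probe restricted zones.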

Agentic AI Is Going Pro: Semi‐Autonomous Teams That Ship Code

Published Dec 6, 2025

Burnout from rote engineering tasks is real—and agentic AI is now positioned to change that. Here’s what happened and why you should care: over the last two weeks (and increasingly since early 2025) agent frameworks and AI‐native workflows have matured so models can plan, act through tools, and coordinate—producing multi‐step outcomes (PRs, reports, backtests) rather than single snippets. Teams are using planner, executor, and critic agents to do multi‐file refactors, incident triage, experiment orchestration, and trading research. That matters because it can compress delivery cycles, raise research throughput, and cut time‐to‐insight—if you govern it. Immediate implications: zone autonomy (green/yellow/red), sandbox execution for trading, enforce tool catalogs and observability/audit logs, and prioritize people who can design and supervise these systems; organizations that do this will gain the edge.
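A planner, executor, and critic can be wired together around a fixed tool catalog so that the executor cannot call anything unregistered. The sketch below uses plain functions in place of model calls; the tool names, the fixed plan, and the acceptance rule are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, dict]  # (tool name, keyword arguments)

# Hypothetical catalog: the only tools the executor is allowed to run.
TOOL_CATALOG: Dict[str, Callable[..., object]] = {
    "add": lambda a, b: a + b,
    "upper": lambda text: text.upper(),
}


def planner(goal: str) -> List[Step]:
    """Stand-in for a model call; returns a fixed plan that includes a bad step."""
    return [("upper", {"text": goal}), ("delete_repo", {})]


def executor(steps: List[Step], audit: List[str]) -> List[object]:
    """Run cataloged tools only; record every attempt in the audit log."""
    results = []
    for name, kwargs in steps:
        tool = TOOL_CATALOG.get(name)
        if tool is None:
            audit.append(f"DENIED {name}")
            continue
        audit.append(f"RAN {name}")
        results.append(tool(**kwargs))
    return results


def critic(results: List[object], audit: List[str]) -> bool:
    """Accept the run only if something was produced and nothing was denied."""
    return bool(results) and not any(entry.startswith("DENIED") for entry in audit)


audit: List[str] = []
results = executor(planner("ship it"), audit)
accepted = critic(results, audit)
print(results, audit, accepted)
```

Here the critic rejects the run because the plan tried to use a tool outside the catalog, which is the kind of event a human supervisor would want surfaced.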

From Giant LLMs to Micro‐AI Fleets: The Distillation Revolution

Published Dec 6, 2025

Paying multi‐million‐dollar annual run‐rates to call giant models? Over the last 14 days the field has accelerated toward systematically distilling big models into compact specialists you can run cheaply on commodity hardware or on‐device, and this summary shows what’s changed and what to do. Recent preprints (2025‐10 to 2025‐12) and reproductions show 1–7B‐parameter students matching teachers on narrow domains while using 4–10× less memory and often running 2–5× faster, with less than 5–10% loss in task quality. FinOps reports (through 2025‐11) flag multi‐million‐dollar inference costs, and OEM benchmarks show sub‐3B models can hit interactive latency on devices whose NPUs deliver tens to low hundreds of TOPS. Why it matters: lower cost, better latency, and privacy transform trading, biotech, and dev tools. Immediate moves: define task constraints (latency <50–100 ms, memory <1–2 GB), build distillation pipelines, centralize registries, and enforce monitoring/MBOMs.
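The summary does not say which distillation objective the preprints use. One common formulation is soft‐target distillation, where the student is trained to match the teacher's temperature‐softened output distribution. The pure‐Python sketch below shows that loss for a single example; the logits and the temperature of 2.0 are made up for illustration.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: List[float], student_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T squared."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]      # hypothetical teacher logits
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 4.0, 1.0]

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

A student whose logits rank the classes like the teacher gets a much smaller loss than one that prefers a different class, which is the signal a distillation pipeline optimizes.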

Multimodal AI Is Becoming the Universal Interface for Complex Workflows

Published Dec 6, 2025

If you’re tired of stitching OCR, ASR, vision models, and LLMs together, pay attention: in the last 14 days major providers pushed multimodal APIs and products into broad preview or GA, turning “nice demos” into a default interface layer. You’ll get models that accept text, images, diagrams, code, audio, and video in one call and return text, structured outputs (JSON/function calls), or tool actions — cutting brittle pipelines for engineers, quants, fintech teams, biotech labs, and creatives. Key wins: cross‐modal grounding, mixed‐format workflows, structured tool calling, and temporal video reasoning. Key risks: harder evaluation, more convincing hallucinations, and PII/compliance challenges that may force on‐device or on‐prem inference. Watch for multimodal‐default SDKs, agent frameworks with screenshot/PDF/video support, and domain benchmarks; immediate moves are to think multimodally, redesign interfaces, and add validation/safety layers.
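A validation layer for structured outputs can be as simple as parsing the model's JSON and checking required fields before anything downstream consumes it. The sketch below uses only the standard library; the invoice schema and field names are hypothetical.

```python
import json
from typing import Any, Dict

# Hypothetical schema for an invoice-extraction call; field names are illustrative.
REQUIRED_FIELDS = {"invoice_id": str, "total": (int, float), "currency": str}


def validate_model_output(raw: str) -> Dict[str, Any]:
    """Parse a model's JSON reply and reject it if required fields are missing or mistyped."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc.msg}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if name not in data:
            raise ValueError(f"missing field: {name}")
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(data[name], bool) or not isinstance(data[name], expected):
            raise ValueError(f"wrong type for field: {name}")
    return data


good = validate_model_output('{"invoice_id": "A-17", "total": 129.5, "currency": "EUR"}')
print(good["total"])
```

Type checks like these catch malformed replies. They do not catch a well-formed but hallucinated value, which still needs cross-checking against the source image or document.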

Why Small, On‐Device "Distilled" AI Will Replace Cloud Giants

Published Dec 6, 2025

Cloud inference bills and GPU scarcity are squeezing margins. Want a cheaper, faster alternative? Over the past two weeks, research releases, open‐source projects, and hardware roadmaps have pushed the industrialization of distilled, on‐device, and domain‐specific AI. Large teachers (100B+ params) are being distilled into student models (often 1–3B), which are then shrunk further with int8/int4/binary quantization and pruning to meet targets like <50 ms latency and <1 GB RAM, running on NPUs and compact accelerators (tens of TOPS). That matters for fintech, trading, biotech, devices, and developer tooling: lower latency, better privacy, easier regulatory proofs, and offline operation. Immediate actions: build distillation + evaluation pipelines, adopt model catalogs and governance, and treat model SBOMs as security hygiene. Watch for risks: harder benchmarking, fragmentation, and supply‐chain tampering. Mastering this will be a 2–3 year competitive edge.
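Two pieces of arithmetic sit behind these targets: how many bytes the weights occupy at a given bit width, and how much precision quantization gives up. The sketch below shows symmetric per‐tensor int8 quantization, which is one simple scheme among many. The weight values are made up, and real toolchains typically quantize per channel or per group.

```python
from typing import List, Tuple


def model_bytes(params: int, bits: int) -> int:
    """Storage for the weights alone at a given bit width."""
    return params * bits // 8


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max_abs, max_abs] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from int8 codes."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]          # hypothetical weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
worst = max(abs(w - r) for w, r in zip(weights, restored))

print(codes, f"max round-trip error {worst:.6f}")
print(f"1B params: {model_bytes(1_000_000_000, 8) / 1e9:.1f} GB at int8, "
      f"{model_bytes(1_000_000_000, 4) / 1e9:.1f} GB at int4")
```

By this count, a 1B‐parameter model needs about 1 GB for its weights at int8 and about 0.5 GB at int4, so a <1 GB RAM target leaves little room above 1B parameters unless the weights go to 4 bits or lower.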